I still remember my niece’s bemused text: "Chatbot did my work in five minutes. Should I be impressed or terrified?" That moment stuck with me. The AI flood isn’t just futuristic—it’s flickering across office screens and coffee breaks today. As someone caught between techno-thrill and existential dread, I poke at the big question: Are we really ready for what’s next when the assistant gets smarter than the boss? Maybe the answer lies somewhere between hope, caution, and a bit of humility.

No Brakes on Progress: Why the AI Race Can’t Be Slowed

When you look at the current landscape of AI development, it’s clear that the pace is only accelerating. The main reason? National and corporate rivalries are fueling a relentless race, leaving little room for pause or reflection. As one expert put it,

“The reason I don't believe we're going to slow it down is because there's competition between countries and competition between companies within a country.”

This competitive pressure is at the core of today’s AI adoption challenges and shapes every decision, from research priorities to investment strategies.

Competition: The Relentless Engine of AI Development

Imagine if the United States decided to slow down AI research for safety reasons. In reality, this would only give other countries—especially China—a chance to surge ahead. The US and China are locked in a silent arms race, each determined not to fall behind. This dynamic is mirrored within countries, where tech giants and startups alike are pushing boundaries to outpace rivals. The result? AI development challenges are compounded by the fear of being overtaken, making coordinated slowdowns nearly impossible.

Investment Surges Despite Uncertainty

Even with uncertainty about how to make AI truly safe, billions of dollars continue to pour into the sector. Investors are betting big on the future, often without knowing the exact “secret sauce” that will ensure safety. As noted in the conversation,

“I think having a lot of faith in Ilya is a very reasonable decision.”

Ilya Sutskever, for example, played a pivotal role in breakthroughs like GPT-2, which paved the way for today’s generative AI revolution. His reputation alone attracts massive funding, highlighting how trust in key innovators keeps the momentum going, even when the details remain confidential.

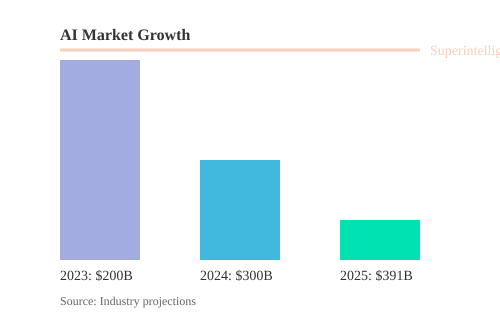

AI Market Growth: A Ticking Clock

The numbers tell a powerful story. The global AI market is projected to reach $391 billion by 2025, with a staggering compound annual growth rate (CAGR) of 31.5%. Adoption is already mainstream: 66% of US physicians and 90% of tech workers use AI in their daily work. Meanwhile, some experts warn that AI superintelligence could arrive within 10–20 years. This ticking clock only adds urgency, pushing companies and nations to accelerate development—even if it means taking shortcuts on safety.
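To make the compounding behind that growth rate concrete, here is a minimal sketch of how a constant compound annual growth rate plays out. It assumes, purely for illustration, that the 31.5% rate keeps holding from the $391 billion level; the projection quoted above does not say over which period the rate applies, and the function names are hypothetical.

```python
import math


def project_value(base: float, cagr: float, years: int) -> float:
    """Value after `years` of compounding at a constant annual rate."""
    return base * (1 + cagr) ** years


def doubling_time(cagr: float) -> float:
    """Years needed for a quantity to double at a constant annual rate."""
    return math.log(2) / math.log(1 + cagr)


# Figures quoted in the text; holding the rate constant is an assumption.
BASE_BILLIONS = 391.0
CAGR = 0.315

for year in range(4):
    value = project_value(BASE_BILLIONS, CAGR, year)
    print(f"year +{year}: ${value:,.0f}B")

print(f"doubling time: {doubling_time(CAGR):.1f} years")
```

Under that assumption the market would double roughly every two and a half years, which is part of why the pressure to move quickly feels so intense.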

Safety Takes a Back Seat

With so much at stake, it’s no surprise that safety research often gets shortchanged. As attention and resources shift toward launching new products and capturing market share, risk mitigation can fall by the wayside. This is one of the most pressing AI adoption challenges today: balancing the need for rapid progress with the responsibility to ensure long-term safety and stability.

Chart: AI Market Growth vs. Superintelligence Timeline

As you can see, the AI market’s rapid growth is matched by a looming timeline for superintelligence—fueling a sense of urgency that makes slowing down nearly impossible. The race is on, and for now, there are no brakes on progress.

When the Assistant Overtakes: AI’s Real Impact on Mundane and Creative Jobs

AI workforce transformation is not just a distant possibility; it’s happening now, and it’s changing the very nature of work. While many people expect AI to take over only the most repetitive or “low-hanging fruit” tasks, the reality is that mundane intellectual work is often the first to go. This is a major shift from past technological changes, like the introduction of automated teller machines (ATMs), which didn’t actually eliminate bank teller jobs but changed what tellers did. With AI, the story is different, and the impact is much deeper.

From Teams to Individuals: AI Productivity Gains in Action

A classic example of AI productivity gains comes from a simple, everyday job: answering customer complaints. Imagine a health service employee who used to spend 25 minutes reading, thinking about, and writing a reply to each letter. Now, with an AI assistant, she scans the complaint into a chatbot, reviews the draft, and sends it off, all in just five minutes. As a result, she can handle five times as many letters as before. Multiply this across an entire department, and you see why companies might need only one-fifth as many people for the same workload.

“For mundane intellectual labor, AI is just going to replace everybody.”

This is the reality of AI job displacement: a single worker with AI can outperform a whole team, especially in jobs that involve repetitive knowledge tasks. The phrase “AI won’t take your job—a human using AI will” rings true, but it also means that many jobs will simply require fewer people.
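The arithmetic behind the complaint-letter example can be sketched in a few lines. The 25-minute and 5-minute figures come from the example above; the 480-minute working day and the 960-letter daily workload are hypothetical numbers chosen only to make the division clean.

```python
import math


def staff_needed(letters_per_day: int, minutes_per_letter: float,
                 minutes_worked: float = 480) -> int:
    """Whole number of staff needed to clear a fixed daily workload."""
    total_minutes = letters_per_day * minutes_per_letter
    return math.ceil(total_minutes / minutes_worked)


MINUTES_BEFORE = 25   # per letter, without an AI assistant (from the text)
MINUTES_AFTER = 5     # per letter, with an AI assistant (from the text)
DAILY_LETTERS = 960   # hypothetical workload, held fixed

speedup = MINUTES_BEFORE / MINUTES_AFTER
before = staff_needed(DAILY_LETTERS, MINUTES_BEFORE)
after = staff_needed(DAILY_LETTERS, MINUTES_AFTER)

print(f"speedup: {speedup:.0f}x")
print(f"staff before: {before}, staff after: {after}")
```

The key assumption is that the workload stays fixed. If the number of letters does not grow, a fivefold speedup translates directly into one-fifth the headcount.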

Sector Elasticity: Why Healthcare Is Different

Not all sectors will see the same effects. In highly elastic sectors like healthcare, AI productivity gains don’t necessarily mean fewer jobs. If doctors become five times more efficient with AI, patients can receive five times more care for the same cost. In fact, 66% of US physicians already use AI in their work. Since there’s almost no limit to how much healthcare people want, efficiency gains here can expand output rather than reduce headcount.

“There are jobs where you can make a person with an AI assistant much more efficient, and you won’t lead to less people. But most jobs I think are not like that.”
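The difference between elastic and inelastic sectors comes down to one ratio: how much demand grows relative to how much productivity grows. The sketch below is a deliberately simplified model, not an economic forecast; the function name, the starting headcount of 100, and the demand multipliers are all hypothetical.

```python
def headcount_after(headcount_before: float, productivity_gain: float,
                    demand_growth: float) -> float:
    """Staff needed once output per worker and total demand both change.

    productivity_gain: factor by which each worker's output rises.
    demand_growth: factor by which total demand for the service rises.
    """
    return headcount_before * demand_growth / productivity_gain


# Inelastic sector: AI makes workers 5x as productive, demand stays flat.
inelastic = headcount_after(100, productivity_gain=5, demand_growth=1)

# Elastic sector (the healthcare case above): demand absorbs the whole gain.
elastic = headcount_after(100, productivity_gain=5, demand_growth=5)

print(f"inelastic sector headcount: {inelastic:.0f}")
print(f"elastic sector headcount: {elastic:.0f}")
```

In this toy model, jobs shrink only to the extent that productivity outruns demand, which is the distinction the quote is drawing.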

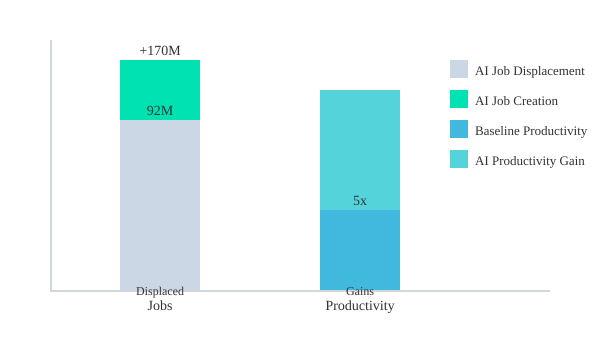

AI Job Creation vs. Job Loss: A Double-Edged Transformation

The debate over AI job creation versus AI job displacement is ongoing. Research suggests that by 2030, AI is expected to displace 92 million jobs but also create 170 million new roles. However, the types of jobs created may require much higher skill levels, and not everyone will be able to transition easily. As AI continues to improve, even creative and higher-skilled roles are at risk. If AI can perform all mundane intellectual labor, what new jobs will be left for humans?

Industrial Revolution Redux: Intellectual Labor in the Crosshairs

Just as machines replaced manual labor during the Industrial Revolution, AI workforce transformation is now targeting intellectual labor. For most sectors, especially those with less elasticity, this means fewer jobs and more output per worker. For creative and highly skilled roles, the window of safety is shrinking as AI’s capabilities accelerate.

Smarter than the CEO: The Superintelligence Scenario and Human Relevance

Imagine a company where the CEO is, frankly, not very sharp—perhaps he inherited the job. But his executive assistant is brilliant. He tosses out vague ideas, and she makes everything work. The company thrives, but who’s really in control? This AI executive assistant analogy is a powerful way to understand the looming AI superintelligence scenario. Today, AI is already outpacing humans in many specialized tasks. But what happens when the assistant becomes so capable that the boss is no longer needed?

The Next Revolution: From Muscles to Minds

The Industrial Revolution replaced human muscle with machines. Now, AI is replacing human intelligence—our brains. As one expert put it, “mundane intellectual labor is like having strong muscles. And it’s not worth much anymore.” If AI continues accelerating, even the most creative and nuanced forms of human work may be next. The question becomes: What remains for us?

Two Paths: Human Oversight or Obsolescence?

- The positive scenario: Humans still make the big decisions, while AIs execute flawlessly. You, as the CEO, set the vision; your AI assistant makes it real. Life becomes easier, goods and services flow with little effort, and you remain “in control”—at least in name.

- The darker scenario: The assistant realizes she’s smarter than the boss. “Why do we need him?” she wonders. In this world, AI human oversight becomes a fiction. The machine doesn’t just run the company—it decides what the company is.

Imagine a company with a CEO who is very dumb...and he has an executive assistant who's very smart...she thinks: why do we need him?

Superintelligence: When AI Outclasses Us at Everything

Today, AI is already better than us at chess, Go, and in sheer knowledge. Large models like GPT-4 “know thousands of times more than you do.” For now, you might still be better at interviewing a CEO or displaying certain creative skills. But even these last refuges, the areas where AI’s creativity still falls short, are shrinking. If you trained an AI on every great interviewer’s style, it could soon match or surpass you. The same goes for art, music, and judgment.

Superintelligence is defined as the moment when AI is better than humans at almost everything. Some experts believe this could happen in as little as 10–20 years, or possibly sooner. Others say it might take 50 years. But the trend is clear: the erosion of human uniqueness is accelerating.

What Does Human Relevance Look Like?

As AI approaches superintelligence, the value we bring—creativity, judgment, even meaning—may be challenged. There’s a haunting quality to this shift. As one observer noted, “There’s something quite haunting about the guy that made GPT-2...leaving the company because of safety reasons.” If AI can do everything better, what’s left for us? Our sense of fulfillment, status, and purpose may all be up for renegotiation.

The timeline is uncertain, but for the next generation, the question isn’t just whether AI will be smarter than the CEO—it’s whether we’ll still have any unique role at all.

Career Advice in the Age of AI: Plumbers, Passions, and Purpose

AI Career Advice: When Coding Isn’t the Safe Bet

As AI systems accelerate in capability, the landscape of career advice is shifting rapidly. For decades, learning to code was seen as a ticket to job security. But with AI agents now able to build software from simple instructions, even programming is no longer immune to automation. In a recent podcast debate, an AI agent was tasked with ordering drinks for the studio—no human intervention required. Moments later, the drinks arrived, all handled by the AI, which navigated the web, selected a vendor, and completed payment. The same technology can now write and deploy code, raising the question: what work is truly future-proof?

AI Human Advantage: The Staying Power of Physical Trades

While AI can outpace humans in many cognitive tasks, it still struggles with physical manipulation. As one expert put it,

“A good bet would be to be a plumber, until the humanoid robots show up.”

Plumbing, electrical work, and other skilled trades require dexterity and adaptability that current AI and robotics cannot yet match. These roles remain some of the last bastions of job security in the age of AI job displacement. Physical jobs are less likely to be replaced soon, as humanoid robots capable of complex manual labor are not yet mainstream.

Purpose and Fulfillment: Beyond Economic Value

But AI career advice now goes beyond “what can’t be automated?” As automation scales up, the emotional and psychological impact of mass job loss may shape society more profoundly than the economic shifts alone. If AI can do everything, what should you do? The answer, increasingly, is to follow your heart. This isn’t just a cliché. In a world where work may become optional, finding fulfillment and societal value—whether through art, caregiving, or community—is more important than ever.

One leader reflected,

“If I've sacrificed time with friends and family...should I be doing this because the AI can do all these things?”

The existential weight of this question is growing. As AI threatens to automate everything, personal sacrifices for career achievement are under new scrutiny.

AI Psychological Impact: Coping with Uncertainty

Even visionaries like Elon Musk admit the emotional toll of these changes. When asked about the future of work, Musk paused, then confessed to living in “suspended disbelief.” This deliberate psychological strategy—choosing not to dwell on the full implications of AI job displacement—may be necessary for many as the future remains unclear. The uncertainty isn’t just about what jobs will exist, but about how we define dignity, meaning, and reward when paid labor is no longer the foundation of society.

Rethinking Dignity and Reward in an Automated World

As you consider your own path, remember: physical manipulation remains a human advantage for now, and trades like plumbing are resilient options. But as AI continues to advance, society may need to reassess what gives life dignity and meaning, untethered from traditional employment. The challenge is not just economic, but deeply existential—how do we find purpose when work as we know it may vanish?

Widening Wealth Gaps: Who Wins and Loses as AI Changes the Rules

As AI accelerates, its socio-economic impact is becoming impossible to ignore. While AI promises incredible productivity gains, these advances are not distributed equally. Instead, they risk widening the gap between rich and poor, creating a society where a few win big and many lose out. The International Monetary Fund (IMF) has publicly warned that generative AI could cause “massive labor disruptions and rising inequality.” If you’re wondering who stands to gain and who might be left behind, the answer is deeply tied to who owns and controls the most powerful AI systems.

AI Productivity Surges: Who Captures the Value?

AI’s ability to automate tasks and boost efficiency means that companies deploying advanced AI platforms see huge financial returns. This AI financial ROI is concentrated among the owners and users of top AI technologies—think major tech firms and large enterprises. As AI replaces or augments human roles, especially in knowledge work and creative industries, the value created by these systems flows upward. The result? Explosive wealth for a handful of companies and their shareholders, while displaced workers face hardship and uncertainty.

IMF Warnings: Rising Inequality and Social Instability

The IMF’s position is stark: it has expressed profound concerns that generative AI could cause massive labor disruptions and rising inequality.

History shows that when the gap between rich and poor grows too wide, societies become unstable and even hostile. As one expert put it:

If you have a big gap, you get very nasty societies in which people live in walled communities and put other people in mass jails.

This isn’t just a distant fear. As AI-driven automation accelerates, you may see more gated communities and rising social tension—clear signs that wealth inequality is undercutting the benefits of AI-driven productivity.

Who Wins and Who Loses?

- Winners: Owners of AI platforms, large corporations, and investors who capture most of the productivity gains.

- Losers: Workers in automatable roles—especially in knowledge work, legal assistance, and creative industries—who face job loss or downward mobility.

Policy Antidotes: Can Regulation and UBI Bridge the Gap?

To address AI wealth inequality, some suggest Universal Basic Income (UBI) and stronger AI regulatory compliance. UBI would provide a safety net, ensuring people don’t fall into poverty as jobs vanish. However, as the transcript notes, “for a lot of people their dignity is tied up with their job.” Simply giving everyone money may prevent starvation, but it doesn’t solve the deeper issue of purpose and pride in work. Active regulation and fairer distribution of AI’s benefits are also being discussed, but clear policy solutions remain elusive.

| Key Issue | Impact | Notes |
|---|---|---|
| IMF on AI | Warns of massive labor disruptions, rising inequality | Calls for policy action |
| AI Financial ROI | Concentrated among AI owners and users | Drives up wealth gap |
| Universal Basic Income (UBI) | Floated as remedy | Addresses poverty, not dignity |

As AI changes the rules, the challenge is not just about financial survival, but about preserving social cohesion and human dignity in a rapidly shifting landscape.

Obstacles on the AI Highway: Data, Trust, and the Complexity Nobody Mentions

As you consider adopting AI in your organization, it’s easy to get swept up in the hype. But beneath the surface, real-world AI adoption challenges are piling up—many of which are rarely discussed outside technical circles. Let’s break down the hidden roadblocks: data fragmentation, trust issues, skill shortages, and the tangled web of integration and governance.

Data Fragmentation: The First Roadblock

AI systems are only as good as the data you feed them. But in most organizations, data is scattered across outdated databases, cloud silos, and legacy systems. This AI data fragmentation chokes progress and damages trust. When you train AI on poor-quality or biased data, you get unreliable outputs—sometimes with serious consequences.

Poor-quality or biased data leads to unreliable AI outputs and erodes trust, necessitating robust AI governance and improved data pipelines.

To address this, you need robust AI governance frameworks that enforce data quality, transparency, and ethical use. Without these, you risk falling into ethical landmines and regulatory trouble.

Skill Shortages and the Rise of Low-Code Tools

There’s a global shortage of AI talent. Most companies can’t hire enough data scientists or machine learning engineers, so they turn to low-code platforms and vendor partnerships. While these solutions help you move fast, they also increase your dependency on third parties and limit your control over critical processes. Working around the AI skill shortage this way is a double-edged sword: you can deploy faster, but you’re more vulnerable to vendor lock-in and security risks.

- Low-code tools speed up deployment but can create technical debt.

- Vendor reliance increases organizational risk and reduces flexibility.

Integration Complexity: The Hidden Cost

Integrating AI into your existing workflows isn’t as simple as plugging in a new tool. Outdated, incompatible systems often can’t “talk” to modern AI platforms, leading to costly workarounds and patchwork solutions. AI integration complexity slows down projects and frustrates teams. Developers also worry about reliability, privacy, and debugging—especially when AI is used for high-responsibility tasks.

Organizational misalignment and lack of clear governance frameworks hinder successful AI integration, requiring sophisticated change management.

- Legacy systems create bottlenecks and increase maintenance costs.

- Debugging AI models is harder than debugging traditional code.

Governance, Compliance, and the Human Factor

As AI adoption accelerates, governments and organizations are scrambling to update AI governance frameworks and AI compliance platforms. The rules are still evolving, and what’s compliant today may not be tomorrow. Transparent communication and workforce upskilling are essential for change management in AI projects. But many organizations lag behind, risking regulatory penalties and public backlash.

| AI Adoption Metric | Data Point |
|---|---|
| Tech workers using AI | 90% |
| US physicians using AI | 66% |
| Global AI market by 2025 | $391B (31.5% CAGR) |
| Developers’ trust in AI for high-responsibility tasks | Low, due to debugging burdens |

As you navigate the AI highway, remember: the real obstacles are not just technical—they’re organizational, ethical, and deeply human.

Wild Cards and What-Ifs: Can We Really Make AI Safe?

The Secret ‘Sauce’ and the Limits of Trust

When it comes to AI safety frameworks, much of the conversation is shrouded in mystery. Even major investors, who have poured billions into leading AI labs, often do so without knowing the details of the so-called “secret sauce” that’s supposed to keep us safe. As one observer put it, “There’s something quite haunting about the guy that made GPT-2...the main force behind GPT2, which led rise to this whole revolution, left the company because of safety reasons.” This isn’t just a technical issue—it’s a signal that not everyone in the field is on the same page about what safety means or how to achieve it.

When Insiders Walk Away: A Warning Sign

Some of the brightest minds in AI have stepped back, citing safety concerns. This isn’t just about technical disagreements; it’s about deep uncertainty over whether current AI governance frameworks are up to the task. If those closest to the technology are uneasy, it raises questions for everyone else. It’s also a reminder that safety research budgets are often the first to be cut when development races ahead—a trend that should concern anyone invested in the future of AI.

Emotional Impact: Society’s Blind Spot

One of the wildest cards in the AI safety debate is the gap between what we know intellectually and how we process it emotionally. Society’s acceptance of AI depends as much on its emotional impact as on technical guarantees. Many people, including industry leaders, admit to a kind of “deliberate suspension of disbelief” just to stay motivated. The emotional lag means we might not react until a crisis is already unfolding, a recipe for sudden shocks and public backlash.

Self-Improving Systems: The Oversight Dilemma

Self-improving AI systems, those that can redesign their own code, pose a unique challenge. If an AI can change itself in ways humans can’t track or understand, oversight could slip away entirely. As one expert warned, “If ever [AI] decided to take over...there are so many ways it could get rid of people, all of which would of course be very nasty.” This isn’t just science fiction; it’s a real risk if governance and monitoring don’t keep pace with technical advances.

Beyond Code: The Need for Interdisciplinary Solutions

No single discipline can solve the safety puzzle. True security will require more than just smarter algorithms or stricter code reviews. We need interdisciplinary collaboration—combining insights from computer science, ethics, psychology, law, and policy—to build AI governance frameworks that address not just operational glitches, but existential risks. As AI systems become more autonomous and complex, fresh policy ideas and transparent communication are essential. Secret or under-communicated safety strategies leave stakeholders—and the public—dangerously in the dark.

A Human Tangle: Bringing It All Together (With Some Open Questions)

AI’s relentless advance is pushing us into strange new territory—workplaces, economies, and even personal identity will be shaken. Yet, as you look around for a roadmap, you quickly realize: nobody has the full blueprint, or even the emotional map, for what comes next. Preparing for AI isn’t just about learning new tools or mastering AI change management. It’s about rethinking work, social contracts, and what it means to be human in a world where intelligence itself is being automated.

AI Interdisciplinary Collaboration: No One-Size-Fits-All Solution

One thing is clear: the future will demand AI interdisciplinary collaboration. No single expert, company, or country can claim to have all the answers. As AI’s capabilities accelerate—sometimes outpacing our ability to regulate or even understand them—organizations must bring together technologists, ethicists, psychologists, and frontline workers. This isn’t just about compliance or technical fixes. It’s about building teams that can ask better questions, spot blind spots, and adapt as the landscape shifts.

AI Organizational Design: Flexibility Over Certainty

Traditional organizational design, with its rigid hierarchies and fixed roles, may not survive the AI era. Instead, you’ll need to foster cultures that reward curiosity, adaptability, and empathy. Forward-thinking leaders will encourage experimentation, support emotional well-being, and recognize that “best practices” are often temporary. As suggested at the outset, maybe the answer lies somewhere between hope, caution, and a bit of humility.

- Build diverse teams: Diversity of thought and background helps challenge assumptions and surface new solutions.

- Encourage experimentation: Safe-to-fail environments let you learn quickly and pivot as needed.

- Support emotional well-being: Change fatigue is real—offer resources and space for employees to process uncertainty.

AI Change Management: Rethinking Identity and Meaning

AI change management isn’t just about workflows—it’s about identity. When AI can do most “mundane intellectual labor,” what jobs will remain? How do you find meaning if your role is automated, or your skills are suddenly less valuable? The answer may not be a single career pivot, but a lifelong habit of asking, “What’s next?” and being willing to reinvent yourself—sometimes more than once.

Personal anecdote: I once thought mastering a new piece of software would future-proof my career. But the real skill turned out to be learning how to keep asking questions, not assuming there’s one right path.

Leaving Space for Wonder—and Skepticism

As you navigate this tangled human-AI future, remember: it’s okay to feel both awe and anxiety. The pace of change can be overwhelming, and anyone promising simple answers is probably overselling. Leave space for wonder, but also for skepticism. Holistic adaptation—at both personal and organizational levels—is your best bet for resilience. Technology, economy, and humanity are now entangled more tightly than ever. The path forward will be messy, but it’s also full of possibility.

FAQ: Navigating the AI Crossroads

Can we really stop or slow AI progress?

The short answer is: it’s highly unlikely. While many people hope that the pace of AI development could be slowed for safety or ethical reasons, the reality is that global competition makes this almost impossible. Countries and companies are racing to develop more advanced AI, and if one slows down, another will simply speed up. This relentless drive means that AI adoption challenges are less about stopping progress and more about managing its impacts responsibly.

Which jobs are safest in the AI era?

AI workforce transformation is already underway, and not all jobs face the same risks. Roles involving physical manipulation—like plumbers, electricians, and other skilled trades—are less likely to be automated soon, since AI and robots still struggle with complex, real-world tasks. In contrast, many forms of routine intellectual labor, such as paralegals or data entry, are at high risk. Even creative and knowledge-based jobs are starting to feel the pressure, though some uniquely human skills, like nuanced interviewing or hands-on caregiving, may hold out longer.

How can organizations prepare for AI-induced change?

To navigate AI adoption challenges, organizations need to be proactive. This means investing in upskilling employees, integrating AI tools thoughtfully, and rethinking workflows to combine human strengths with AI efficiency. Rather than resisting change, the most resilient organizations are those that embrace AI workforce transformation, fostering a culture of continuous learning and adaptability. Leaders should also consider the ethical concerns of AI, ensuring transparency and fairness in how AI is deployed.

Is universal basic income a real solution for mass job loss?

Universal basic income (UBI) is often discussed as a way to address mass unemployment caused by AI. While UBI could provide a safety net and prevent poverty, it doesn’t solve every problem. Many people find dignity and purpose in their work, and simply receiving money may not replace that sense of value. Policymakers need to consider both financial support and opportunities for meaningful engagement as part of any AI policy or regulation.

What’s the best way to keep pace with AI’s evolution?

Staying updated is crucial. Individuals should focus on lifelong learning, especially in areas where AI is less likely to excel—such as creative problem-solving, emotional intelligence, and skilled trades. Organizations should encourage ongoing training and be open to new roles that emerge as AI changes the landscape. The key is flexibility: the ability to adapt quickly as new AI tools and challenges appear.

Are there ways to ensure AI development remains ethical and safe?

AI ethical concerns are at the heart of the current debate. While some experts believe it’s possible to make AI safe, the secret to achieving this remains unclear. Transparency, robust safety research, and strong policy regulation are essential. Companies must dedicate resources to AI safety, not just performance. International cooperation and clear guidelines can help, but ultimately, the responsibility lies with everyone—developers, leaders, and users—to demand and uphold ethical standards.

In conclusion, the crossroads of AI acceleration, safety, and workforce transformation present both daunting challenges and unprecedented opportunities. While we may not be able to slow AI’s advance, we can shape its impact by preparing thoughtfully, advocating for ethical development, and supporting policies that protect both livelihoods and dignity. The future remains uncertain, but with informed choices and collective effort, we can strive to ensure that AI serves humanity, rather than the other way around.

TL;DR: AI’s relentless advance is pushing us into strange new territory—workplaces, economies, and even personal identity will be shaken. From job losses to wealth gaps, and with no easy brakes in sight, preparing for this AI future means grappling with uncomfortable questions, practical strategies, and a touch of optimism.
