Years ago, I thought I knew technology. Then AI happened. Somewhere between feeding it my favorite playlist and demanding it edit my rambling resume, I realized I was talking to a machine in a language it didn’t understand. Two decades into my career, I’ve built billion-dollar companies, served on boards, and invested in disruptive AI startups. But the biggest insight? Most people approach AI all wrong, stuck with half-baked prompts and generic outputs. Today, I’ll walk you through a roadmap not just to literacy, but to conversational mastery: a journey shaped by missed beats, unexpected analogies (Humpty Dumpty, anyone?), and sharp little shortcuts I wish I’d had myself. Let’s get creative and actually outsmart the algorithms.

Week One: Speaking Machine – How I Unlearned English to Master AI

The Humpty Dumpty Revelation: Why AI Isn’t Guessing Like a Person

“Most people using AI are doing it wrong.” That was my wake-up call after two decades in tech. I realized that the secret to mastering AI in 2025 isn’t knowing more English; it’s unlearning it. The way we talk to people doesn’t work for generative AI. Why? Because AI doesn’t understand language the way we do. It predicts it, like autocomplete on steroids.

Let’s break it down with the classic nursery rhyme: If I say, “Humpty Dumpty sat on a...,” your brain instantly fills in “wall.” Not because you’re thinking hard, but because you’ve seen it before. AI works the same way. It predicts what comes next based on patterns, not understanding.
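To make the “patterns, not understanding” point concrete, here is a toy next-word predictor in Python. It is a sketch under big simplifying assumptions. It counts which word followed which in a tiny made-up text (a bigram model) and picks the most frequent follower. Modern models are neural networks trained on vastly more data, but the core move is the same: predict what usually comes next. The function names are my own.

```python
from __future__ import annotations

from collections import Counter, defaultdict

# Toy training text, made up for illustration. The "model" only ever
# knows these patterns: it counts what tends to follow what.
CORPUS = (
    "humpty dumpty sat on a wall . "
    "humpty dumpty had a great fall . "
    "the cat sat on a mat . "
    "the dog sat on a wall ."
)


def train_bigrams(text: str) -> dict[str, Counter]:
    """For every word, count which words followed it in the text."""
    words = text.split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def next_word_probs(follows: dict[str, Counter], word: str) -> dict[str, float]:
    """Turn raw follow-counts into probabilities that sum to 1."""
    counts = follows.get(word, Counter())
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()} if total else {}


def complete(follows: dict[str, Counter], prompt: str) -> str | None:
    """Predict the next word from the prompt's last word. No understanding involved."""
    words = prompt.lower().split()
    if not words:
        return None
    probs = next_word_probs(follows, words[-1])
    return max(probs, key=probs.get) if probs else None


model = train_bigrams(CORPUS)
print(complete(model, "Humpty Dumpty sat on a"))  # → wall
print(next_word_probs(model, "a"))
```

The prediction comes out as “wall” only because “wall” followed “a” more often than anything else in the training text, which is exactly the Humpty Dumpty effect.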

Tokens, Vectors, Probability: Decoding the Strange Logic of ChatGPT and Gemini

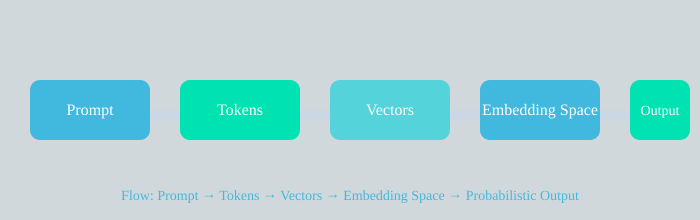

Here’s what happens under the hood of Generative AI like ChatGPT or Gemini:

- Step 1: Tokenization – AI breaks your prompt into tokens, which are whole words or word fragments. Common words like “sat” and “on” usually get a token each, while a rarer word like “Humpty” may be split into several pieces.

- Step 2: Vectorization – Each token becomes a list of numbers (a vector) in a huge mathematical “embedding space.”

- Step 3: Proximity & Probability – Tokens that show up in similar contexts (like “Humpty,” “egg,” “wall,” “fall”) end up with nearby vectors. When you prompt, the model scores every token in its vocabulary and picks the next one by probability, rather than looking up a stored answer.
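The three steps above can be sketched with toy stand-ins. Everything here is an assumption for illustration: the tokenizer just splits on spaces, the three-number vectors are hand-picked rather than learned, and the next-token scores are invented. What the sketch does show faithfully is the arithmetic. Cosine similarity measures “proximity,” and a softmax turns raw scores into probabilities.

```python
from __future__ import annotations

import math

# Hand-made 3-number "embeddings", invented for illustration. Real models
# learn vectors with hundreds or thousands of dimensions; nobody picks them by hand.
EMBEDDINGS = {
    "humpty": [0.9, 0.3, 0.1],
    "egg": [0.8, 0.6, 0.0],
    "wall": [0.7, 0.7, 0.1],
    "fall": [0.6, 0.1, 0.9],
    "invoice": [0.0, 0.9, 0.0],
}


def tokenize(text: str) -> list[str]:
    """Toy tokenizer: lowercase and split on spaces (real ones use subword pieces)."""
    return text.lower().split()


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity: close to 1.0 means the vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def softmax(scores: dict[str, float]) -> dict[str, float]:
    """Turn raw next-token scores into probabilities that sum to 1."""
    top = max(scores.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(s - top) for tok, s in scores.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


tokens = tokenize("Humpty Dumpty sat on a")  # Step 1
print(tokens)
print(cosine(EMBEDDINGS["humpty"], EMBEDDINGS["egg"]))      # Step 2-3: higher, related ideas
print(cosine(EMBEDDINGS["humpty"], EMBEDDINGS["invoice"]))  # lower, unrelated ideas

# Step 3: invented scores a model might give candidate next tokens.
probs = softmax({"wall": 4.0, "fence": 2.0, "invoice": -1.0})
print(max(probs, key=probs.get))  # the most likely next token
```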

That’s why AI can feel both smart and alien. It’s not storing answers—it’s generating them on the fly.

Machine English: Crafting Prompts AI Can Truly Compute

Here’s the big mistake: Most people talk to AI like it’s a person. But prompt engineering is a science. If your prompt is vague, this guessing machine called ChatGPT or Gemini will produce guesses that are also vague. If you’re sharp and specific, you get sharp, targeted results.


Sharpening Prompts with AIM: Actor, Input, Mission

To write prompts that actually land, I use the AIM framework:

- Actor: Who is the AI acting as? (e.g., “You are a professional resume editor.”)

- Input: What are you giving it? (e.g., “Here is my resume.”)

- Mission: What do you want? (e.g., “Make it more concise and achievement-focused.”)

Real-life example: Early on, I asked ChatGPT, “Fix my resume.” The result? Generic fluff. But with AIM: “You are a senior recruiter. Here is my resume. Rewrite it to highlight leadership and measurable results.” The output was far sharper.
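If you reuse AIM often, it can help to make the three parts explicit fields so none gets skipped. This is a minimal sketch. `AimPrompt` and its field names are hypothetical, and the output is just the prompt text you would paste into ChatGPT or Gemini.

```python
from dataclasses import dataclass


@dataclass
class AimPrompt:
    """Actor, Input, Mission: the three parts of an AIM prompt (hypothetical helper)."""
    actor: str          # who the AI should act as, e.g. "a senior recruiter"
    input_desc: str     # what you are handing it, e.g. "my resume"
    mission: str        # the specific outcome you want
    material: str = ""  # optional: the actual text you are pasting in

    def render(self) -> str:
        # Refuse to build a prompt with a missing part: that is how vague prompts happen.
        for name in ("actor", "input_desc", "mission"):
            if not getattr(self, name).strip():
                raise ValueError(f"AIM prompt is missing its {name}")
        prompt = f"You are {self.actor}. Here is {self.input_desc}. {self.mission}"
        if self.material:
            # Fence the pasted material so it is easier to tell apart from the instructions.
            prompt += f'\n\n"""\n{self.material}\n"""'
        return prompt


aim = AimPrompt(
    actor="a senior recruiter",
    input_desc="my resume",
    mission="Rewrite it to highlight leadership and measurable results.",
)
print(aim.render())
```

Rendering the example reproduces the sharper resume prompt word for word; in practice you would also pass the resume text as `material`.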

Why Vague Prompts Lead to Vague Answers—My Disaster Story

Once, I gave AI a prompt as vague as “help me with directions.” The result? A confusing mess—like asking a drummer to improvise without a beat. But when I learned to “play the notes” clearly, like in music, the AI started producing results that were crisp, focused, and actionable.

How Learning to Talk to AI Is Part Science, Part Music Lesson

Learning prompt engineering is like learning a new instrument. At first, it’s awkward. But as you master the rhythm of AIM, your outputs get noticeably sharper. This is the difference between being lost in translation and becoming an AI whisperer.

Learning Deeply, Not Widely: The ‘Instrument’ Approach to Choosing AI Tools

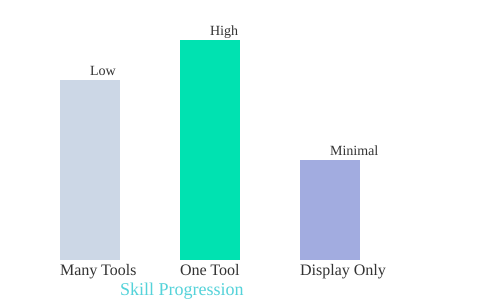

If you’re just starting your AI learning roadmap, you might be tempted to Google the “Top 50 AI Tools” and try them all. I get it—there’s a certain thrill in seeing what’s out there. But let me save you some time (and a lot of confusion): that’s the fastest way to burn out and stall your progress. The real AI roadmap for beginners is about depth, not breadth.

Why Tool-Hopping Leads to Overwhelm

Most people begin their AI journey by collecting tools like trophies. They skim through lists, pick a handful—maybe ChatGPT, Gemini, a few niche apps—and bounce between them. The result? Shallow understanding, scattered skills, and a sense that AI is just too much to handle. There’s simply too much out there to learn everything at once.

Pick Your ‘Instrument’—Then Go Deep

Here’s my advice: pick one AI model and dig in. Think of it like learning a musical instrument. You wouldn’t try to master the guitar, piano, and drums all at once. Instead, you’d pick one, practice daily, and get to know its unique feel. The same goes for AI tools. Whether you choose ChatGPT (arguably the most mature), Gemini (great for Google ecosystem fans), or Claude for business projects, the key is to master its quirks and strengths.

The Psychological Edge: Lessons from Drumming

Let me share a personal story. I spent over 10,000 hours as a drummer. When I decided to learn guitar, it wasn’t easy, but it wasn’t foreign, either. Why? Because my brain was already trained to see patterns, practice deliberately, and break down complex skills. There’s even research in Frontiers in Psychology showing that drummers pick up guitar faster than total beginners, even though the skills are different. This is called transfer of learning, and it applies just as much to AI.

“The deeper you dig into one foundational model, the faster you will find the rhythm of all the others.”

Week One Challenge: Find the Rhythm of Your AI Tool

For your first week, commit to a single AI tool. Spend time with it every day. Learn its personality, its cadence, its limits, and its strengths. Notice how it responds to different prompts. Where does it shine? Where does it stumble? The goal is to start feeling its rhythm—just like you would with an instrument.

- Pick one AI tool (ChatGPT, Gemini, or Claude)

- Use it exclusively for all your AI projects this week

- Experiment with different types of prompts

- Document its quirks and your discoveries

From Comfort to Mastery—And Beyond

Once you’re comfortable with your chosen platform, you’ll notice something amazing: the skills and instincts you’ve built transfer easily to other AI tools. You’ll recognize structures, patterns, and workflows, making it much easier to branch out later. It’s the difference between playing instruments and simply collecting them for display.

Write Structured Prompts Effortlessly

By the end of the week, try using the AIM framework for your prompts. With enough focused practice, you’ll be able to write effective, structured prompts almost without thinking—just like a musician improvising after mastering their scales.

Figure: Bar chart comparing skill progression when focusing on one AI tool, dabbling in many, or just collecting tools.

Context is King: How to ‘Map’ Your Prompts for World-Class Results

Let me be blunt: the world’s smartest AI will sound clueless unless you feed it context. This lesson hit home for me early in my AI mastery roadmap. I remember asking a top-tier language model to “summarize this report,” only to get a generic, almost nonsensical answer. Why? I hadn’t given it the report—or any background. That’s when I realized: context isn’t optional, it’s everything.

Why Context Turns Clueless AI into Insightful AI

Every answer AI gives depends on how it understands your question. Without context, it has no grounding, no way to anchor its billions of numbers and patterns to your real-world needs. Along my own learning path, I saw even the most advanced models flounder without the right setup. But add context, and suddenly the AI’s responses become sharp, relevant, and actionable.

The MAP Framework: Building a ‘Map’ for AI Navigation

To get world-class results, I use a simple but powerful framework: MAP, which stands for Memory, Assets and Actions, and Prompt (the “A” covers both). Think of it as a GPS for your prompts:

- M = Memory: The conversation history or notes from previous sessions. This is how you build continuity. I often re-paste earlier threads or ask the AI to summarize past chats before starting a new one. That way, what matters is back in front of the model, and it doesn’t start from scratch each time.

- A = Assets: These are the files, data, and resources you attach or paste into your prompt. When I feed the AI a spreadsheet, a PDF, or a code snippet, I’m grounding its output in my reality—not just the abstract world of its training data.

- A = Actions: These are the tools or commands the model can use, like searching the web, scanning your drive, writing code, or creating a Notion doc. By specifying actions, you let the AI do real work, not just talk about it.

- P = Prompt: This is your actual instruction. The clearer and more detailed your prompt, the better the AI’s reasoning and response.
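Here is one way to assemble the four MAP pieces into a single message. The `MapPrompt` class, the section labels, and the ordering (context first, instruction last) are my own assumptions, not a required format. The example uses a Q2 sales report placeholder rather than real data.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class MapPrompt:
    """Memory, Assets, Actions, Prompt bundled into one message (hypothetical helper)."""
    prompt: str                                           # P: the actual instruction
    memory: list[str] = field(default_factory=list)       # M: notes carried over from earlier chats
    assets: dict[str, str] = field(default_factory=dict)  # A: file name -> pasted content
    actions: list[str] = field(default_factory=list)      # A: tools or steps the model may use

    def render(self) -> str:
        sections = []
        if self.memory:
            sections.append("MEMORY\n" + "\n".join(f"- {note}" for note in self.memory))
        if self.assets:
            blocks = [f"[{name}]\n{body}" for name, body in self.assets.items()]
            sections.append("ASSETS\n" + "\n\n".join(blocks))
        if self.actions:
            sections.append("ACTIONS YOU MAY TAKE\n" + "\n".join(f"- {a}" for a in self.actions))
        sections.append("TASK\n" + self.prompt)  # the instruction always goes last
        return "\n\n".join(sections)


request = MapPrompt(
    prompt="Summarize key revenue trends for a data science presentation.",
    memory=["We previously discussed regional growth."],
    assets={"q2_sales_report.csv": "<paste the report here>"},
    actions=["Organize figures in a table"],
)
print(request.render())
```

Empty sections are simply left out, so a bare `MapPrompt(prompt="Summarize this.")` degrades to the bare prompt, which makes the missing context easy to spot.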

How Continuity Shapes Smarter Conversations

Expert-level AI interaction hinges on rigorous context. By feeding the model previous chat history or asking for a summary, you create a thread of memory. This continuity means the AI can reference earlier insights, making each response more nuanced and accurate. In my experience, this single habit puts you in the top 10% of AI users.
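Why does re-feeding history matter? Many chat APIs are stateless: each request only sees the messages sent along with it. Below is a minimal sketch of keeping that thread alive. It assumes the role/content message shape that many chat APIs accept. `Conversation` and its methods are hypothetical, and the carried summary stands in for one you would ask the AI to write at the end of a previous session.

```python
from __future__ import annotations


class Conversation:
    """Re-sends history each turn, for a model API that keeps nothing between calls (hypothetical)."""

    def __init__(self, carried_summary: str = "") -> None:
        self.carried_summary = carried_summary  # summary pasted in from an earlier session
        self.turns: list[dict[str, str]] = []

    def add(self, role: str, content: str) -> None:
        self.turns.append({"role": role, "content": content})

    def context(self, keep_last: int = 6) -> list[dict[str, str]]:
        """Summary first, then only the most recent turns to stay within the context window."""
        messages = []
        if self.carried_summary:
            messages.append({"role": "system",
                             "content": f"Summary of earlier sessions: {self.carried_summary}"})
        # Guard keep_last <= 0: self.turns[-0:] would return the whole list.
        messages.extend(self.turns[-keep_last:] if keep_last > 0 else [])
        return messages


chat = Conversation(carried_summary="We agreed to focus the deck on regional growth.")
for i in range(1, 9):
    chat.add("user" if i % 2 else "assistant", f"turn {i}")
print(chat.context(keep_last=3))
```

Trimming to the last few turns while keeping a summary is one design choice among several; it trades detail from older turns for room in the context window.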

Grounding Outputs with Real-World Assets

Whenever I want data science insights or need help with a project, I always attach relevant files or datasets. This grounds the AI’s reasoning, so its answers are tailored to my actual situation—not just a generic scenario. The difference in output quality is dramatic.

Action Commands: Letting AI Work for You

Don’t just ask questions—give the AI actions. Whether it’s “search the web for recent trends,” “write this Python function,” or “organize this data in a table,” action-based prompts unlock the model’s full potential.
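Under the hood, “actions” usually work like this: the model replies with a structured request, and your code, not the model, runs the matching function. The sketch below assumes a made-up JSON reply format and a single hypothetical `make_table` action. Real products and APIs define their own tool-calling formats.

```python
from __future__ import annotations

import json


def make_table(rows: list[list[str]]) -> str:
    """Render rows (first row = header) as a Markdown table."""
    header, *body = rows
    lines = ["| " + " | ".join(header) + " |", "|" + "---|" * len(header)]
    lines += ["| " + " | ".join(row) + " |" for row in body]
    return "\n".join(lines)


# Registry of actions the model is allowed to request (names are hypothetical).
ACTIONS = {"make_table": make_table}


def run_action(model_reply: str) -> str:
    """Parse a JSON action request from the model and run the matching function."""
    request = json.loads(model_reply)
    name = request["action"]
    if name not in ACTIONS:
        raise ValueError(f"model asked for an unknown action: {name!r}")
    return ACTIONS[name](**request.get("args", {}))


# A reply we pretend the model sent; the values are made up for illustration.
reply = json.dumps({
    "action": "make_table",
    "args": {"rows": [["Region", "Trend"], ["North", "up"], ["South", "flat"]]},
})
print(run_action(reply))
```

Keeping an explicit registry means the model can only trigger actions you chose to expose.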

Contextual Prompting vs. Bare Questions: Real Examples

Compare these two prompts:

- Bare: “Summarize this.”

- Contextual: “Using the attached Q2 sales report, summarize key revenue trends for a data science presentation. Reference our previous discussion on regional growth.”

The second prompt, rich with context, produces world-class results. That’s the power of MAP—and why context is the secret weapon in your AI mastery roadmap.

Debug Your Mind: From Prompting to Iterating Like an AI Engineer

My journey into AI mastery didn’t start with success. The first time I ever prompted an AI, one of OpenAI’s earliest models, I spent an entire day wrestling with it. The outputs were random, unpredictable, and left me questioning my own logic. I was frustrated, and back then no one even used the phrase prompt engineering. I learned the hard way: prompting isn’t just typing; it’s iterating. Debugging your thinking is the real step four on this roadmap.

"When the output is weak, I assume the fault is mine. Because it is."

This mindset shift was crucial. If I didn’t get the answer I wanted, I stopped blaming the AI. Instead, I started debugging my own instructions. Did I set the right persona? Did I provide enough context? Was my goal clear? Sometimes, I’d even ask the model itself: “What did you do, and why did you choose that answer?” To my surprise, it would walk me through its logic. That explanation is the model’s after-the-fact account, not a literal readout of its internals, but it often showed me exactly where my instructions had led it astray. That’s when the magic started. I wasn’t just using AI; I was learning how it thinks.

From Frustration to Feedback Loops: Three Iterative Patterns

Over time, I developed three “cheat codes” that turned awkward AI interactions into mutual lessons. These patterns are the backbone of AI best practices—they transform you from a consumer into a collaborator.

Chain of Thought Pattern

When an answer feels off, I prompt: “Think step by step. Show your reasoning. Then give me the final concise answer.” This puts the AI’s reasoning on the page where I can inspect it. For example, if I ask for a business strategy and the answer seems shallow, I use this pattern to see each step the AI takes and spot where things go astray.

Verifier Pattern

Sometimes the problem is my own unclear intent. Here, I tell the AI: “Ask me three questions that would clarify my intent to you. Ask them one at a time.” The AI becomes an active partner, probing for details before trying again. This pattern is invaluable for complex tasks, like writing a nuanced article or generating code, where precision matters.

Refinement Pattern

This one is about sharpening my own prompts. I say: “Before answering, propose two sharper versions of my question. Ask which one I prefer.” The AI helps me ask better questions, refining the input before generating an output. It’s like having a coach who teaches me to communicate more clearly.
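Because these three patterns are fixed phrases, they are easy to keep as reusable suffixes. This is a small sketch. The `PATTERNS` keys and the `with_pattern` helper are hypothetical names, while the pattern text is quoted from the three patterns in this section.

```python
# The three iterative patterns, stored verbatim as reusable prompt suffixes.
PATTERNS = {
    "chain_of_thought": "Think step by step. Show your reasoning. Then give me the final concise answer.",
    "verifier": "Ask me three questions that would clarify my intent to you. Ask them one at a time.",
    "refinement": "Before answering, propose two sharper versions of my question. Ask which one I prefer.",
}


def with_pattern(question: str, pattern: str) -> str:
    """Append one pattern's instructions to a question, separated by a blank line."""
    if pattern not in PATTERNS:
        raise ValueError(f"unknown pattern {pattern!r}; choose from {sorted(PATTERNS)}")
    return f"{question.strip()}\n\n{PATTERNS[pattern]}"


print(with_pattern("Draft a go-to-market strategy for our new app.", "chain_of_thought"))
```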

Iteration: The Heart of Prompt Engineering

Each of these patterns is a feedback loop. I test, tweak, tune, and push, just like debugging code or practicing music. Every “bad” answer is a clue about my own communication. When I ask the AI to explain itself, it lays out its logic for me. When I clarify my intent, it gets the context it needs to understand me. And when I refine my questions, I learn to think more clearly.

Eventually, it clicks. I’m not just talking at AI—I’m having a real conversation. The unexpected joy is in pushing both the AI and myself to think better. This is the essence of prompt engineering and the foundation of true AI mastery in 2025.

Steering Toward Expertise: Channeling AI’s Brilliance Without Echo Chamber Traps

When I first started using large language models for my AI projects, I made a rookie mistake: I typed in a vague prompt for a LinkedIn post, something like “Share tips for team innovation.” The result? A bland, buzzword-heavy answer that could have been ripped straight from a generic Wikipedia article. It was so forgettable, I almost posted it by accident. That’s when I realized: generic prompts yield generic answers. If you want your AI output to stand out, you have to steer the model toward expertise, not mediocrity.

Why Generic Prompts Fail: My LinkedIn Post Disaster

When you ask ChatGPT or any large language model a broad question, you’re not searching a database of facts. Instead, you’re sampling from millions of ideas: some brilliant, some average, some completely made up. Vague prompts like “How do I make my team more innovative?” tend to return surface-level advice. I learned this the hard way with that LinkedIn draft, which was filled with clichés and empty jargon.

The ‘Expert-Rich Prompt’ Trick

Here’s the game-changer: invoke specific experts, frameworks, or research in your prompts. Instead of asking for generic advice, I now say:

Explain how to make a team more innovative using ideas from Pixar’s brain trust, Satya Nadella’s strategy, and Harvard’s research.

This approach pulls the AI away from the middle and toward the sharper edges of its “brain.” By referencing experts, you nudge the model to synthesize deeper, more original content, turning AI from a noise machine into a curator of wisdom. It’s one of the most effective habits I’ve found, both for personal learning and for building with AI.

Step-by-Step: From Experts to Original Frameworks

- Ask AI to list current experts, researchers, and key papers in your topic area. Example: “List the top experts and research papers on black holes.”

- Feed those names and sources back into the model with a prompt like, “Using these experts, synthesize an original framework that fills a current gap in black hole science.”

- Review and refine the output, pushing for more depth or clarity as needed.
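The first two steps chain naturally, with step three (your review) sitting on top. In this sketch, `ask` is any function that sends a prompt to a model and returns its reply. It is a hypothetical hook, not a real library call, so the example plugs in a fake model to show which prompts get sent.

```python
from typing import Callable


def expert_synthesis(topic: str, ask: Callable[[str], str]) -> str:
    """Two-step expert-rich prompting; `ask` sends a prompt to some model (hypothetical hook)."""
    # Step 1: get named experts and sources. Verify these before trusting them.
    experts = ask(f"List the top experts and research papers on {topic}.")
    # Step 2: feed that list back and ask for a synthesis grounded in it.
    return ask(
        f"Using these experts and sources:\n{experts}\n\n"
        f"Synthesize an original framework that fills a current gap in research on {topic}."
    )


# A fake model that records prompts, so we can see the chain without any API.
sent = []


def fake_model(prompt: str) -> str:
    sent.append(prompt)
    return "EXPERT LIST" if len(sent) == 1 else "DRAFT FRAMEWORK"


print(expert_synthesis("black holes", fake_model))
```

Step three stays with you: read the draft, check the named sources, and push back where it is thin.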

This method ensures your AI projects tap into the latest thinking, not just recycled internet wisdom.

Safeguard: Always Verify Facts

Here’s the catch: AI can sound convincing even when it’s confidently wrong. I’ve seen models claim “68% of Americans are getting divorced”—a made-up statistic delivered with total authority. That’s why fact-checking is a must in any AI implementation.

The Five-Point Critique: Separating Intelligence from Illusion

To avoid echo chamber traps, I use this five-step verification process for every major AI output:

| Step | Description | Example/Note |
|---|---|---|
| Assumptions | Ask AI to list every assumption it made and rank each by confidence. | Clarifies hidden logic. |
| Sources | Request two independent sources for each major claim, including title, URL, and a one-line quote. | Check for reliability. |
| Counter-evidence | Ask AI to find a credible source that disagrees and explain which assumptions the disagreement hinges on. | Reveals depth of reasoning. |
| Auditing | Ask AI to recompute figures and show its math or code. | Numbers often change on review. |
| Cross-model verification | Run the same prompt in ChatGPT, Gemini, and Claude; have the models critique each other. | Spot inconsistencies and hallucinations (e.g., “68% of Americans are getting divorced”). |
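The cross-model step can be partly automated for numeric claims. This rough sketch assumes you have already collected each model’s reply as text. It pulls the first percentage from each reply and flags gaps or disagreements. The replies in the example are invented, not real statistics, and a clean result proves nothing on its own; it only tells you where to look first.

```python
from __future__ import annotations

import re

PERCENT = re.compile(r"(\d+(?:\.\d+)?)\s*%")


def first_percentage(text: str) -> float | None:
    """Return the first percentage mentioned in the text, if any."""
    match = PERCENT.search(text)
    return float(match.group(1)) if match else None


def cross_check(answers: dict[str, str], tolerance: float = 1.0) -> list[str]:
    """Flag models that give no figure, or figures that disagree by more than `tolerance` points."""
    flags = []
    figures = {}
    for model, text in answers.items():
        value = first_percentage(text)
        if value is None:
            flags.append(f"{model}: no percentage given")
        else:
            figures[model] = value
    if len(figures) >= 2:
        low = min(figures, key=figures.get)
        high = max(figures, key=figures.get)
        if figures[high] - figures[low] > tolerance:
            flags.append(f"{low} says {figures[low]}% but {high} says {figures[high]}%: verify before using")
    return flags


# Invented replies (not real statistics), standing in for one prompt run through three models.
answers = {
    "model_a": "About 68% of Americans are getting divorced.",
    "model_b": "Estimates range from 40% to 50%.",
    "model_c": "I don't have a reliable figure for that.",
}
for flag in cross_check(answers):
    print(flag)
```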

By steering AI toward expert sources and rigorously verifying its output, you unlock its true potential—moving beyond blandness to genuine insight for your AI projects.

Developing Your Own Taste: Sparring With AI and the OCEAN Framework

Most people use AI like a vending machine. They push a button, grab the same junk food output everyone else gets, and call it a day. But if you’re reading this, you’re already past that stage. You’re in week four of your AI mastery roadmap, and it’s time to step into the ring. Treat AI like your sparring partner. This is where real AI adoption begins—by moving beyond copy-paste and developing your own taste.

Moving Beyond Vending Machine AI

Spotting cookie-cutter outputs is the first skill to master. If your AI-generated content sounds generic, chances are, it is. Anyone can spot a bland, recycled answer. The real skill is discernment: Is the output original, insightful, and personalized? If not, don’t settle. Push for more. This is how elite users stand out: they refuse to accept the first, easy answer.

Treating AI as a Sparring Partner

Imagine AI as your debate opponent, not just a tool. Argue with it. Challenge its logic. Ask it to defend its choices. When you push back, you sharpen both your thinking and the AI’s. This back-and-forth is where your unique voice starts to emerge. You’re not just accepting answers; you’re shaping them, making each output a reflection of your own perspective.

Building Your Unique Voice

When does an AI-generated idea actually become yours? It’s when you’ve interrogated the response, challenged its assumptions, and demanded more depth. By the fourth week of your AI adoption journey, outputs should sound like you, not just the collective internet. This is the moment you stop being a passive consumer and start becoming an AI whisperer.

The OCEAN Framework: Demanding Depth

To avoid generic answers and cultivate real insight, I use the OCEAN Framework. This is my go-to method for turning bland outputs into something truly original:

- Originality: Is there a non-obvious idea in the response? If not, ask for more.

- Contrast: Does the answer challenge common wisdom or offer a surprising comparison?

- Edge: Is there a bold, risky, or controversial element?

- Angle: Does the response approach the topic from a unique perspective?

- Novelty: Is there something here you haven’t seen before?
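One way to make OCEAN a habit is to score each answer against the five questions and turn every miss into a follow-up prompt. This is a minimal sketch. The follow-up wording is my own illustration, except the “Angle” prompt, which reuses the wild card prompt from this section.

```python
from __future__ import annotations

# Follow-up prompts per OCEAN criterion. Wording is illustrative (hypothetical),
# except "Angle", which reuses the wild card prompt from the text.
FOLLOW_UPS = {
    "Originality": "What is the least obvious idea here? Build on that one.",
    "Contrast": "Which common belief does this challenge? Compare it with the usual advice.",
    "Edge": "Add one bold or controversial recommendation and explain the risk.",
    "Angle": "Give me three angles no one else has thought about. Label one as risky.",
    "Novelty": "Replace anything I could find in a top search result with something less familiar.",
}


def ocean_follow_ups(passed: dict[str, bool]) -> list[str]:
    """Return a follow-up prompt for every OCEAN criterion the answer did not pass."""
    unknown = set(passed) - set(FOLLOW_UPS)
    if unknown:
        raise ValueError(f"not OCEAN criteria: {sorted(unknown)}")
    # A criterion you didn't score counts as failed, so it still gets a follow-up.
    return [prompt for name, prompt in FOLLOW_UPS.items() if not passed.get(name, False)]


review = {"Originality": True, "Contrast": True, "Edge": False, "Angle": True, "Novelty": True}
print(ocean_follow_ups(review))
```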

When I get a bland answer, I use prompts like:

Give me three angles no one else has thought about. Label one as risky.

This wild card test forces AI to dig deeper, surfacing ideas that are fresh and sometimes even risky. That’s where the magic happens.

Creative Failures and ‘Losing Arguments’

Don’t be afraid to lose arguments with your AI sparring partner. Sometimes, the best insights come from creative failures—when the AI surprises you or even outsmarts you. Embrace these moments. Each debate, each pushback, is a step toward developing your own taste and mastering the art of AI best practices.

Remember, the goal is not to sound like everyone else. The goal is to make AI outputs reflect you—your thinking, your style, your edge. That’s the heart of the AI mastery roadmap.

Charting the Unbeaten Path: Next Steps for Future-Proof AI Mastery

How to Keep Leveling Up: Continuous Learning, Networking, and Portfolio Building

If you want to truly transform your AI career, you can’t just follow the crowd. I’ve learned that continuous learning, active AI networking, and hands-on portfolio building are the three pillars that separate future-proof AI professionals from the rest. Here’s my logic:

- Continuous learning keeps your skills sharp as the field evolves.

- Networking opens doors to mentorship and hidden job opportunities.

- Portfolio building proves your expertise to employers and collaborators.

For example, when I contributed to an open-source MLOps project last year, not only did my technical skills grow, but I also connected with a mentor who later referred me for a contract role. That’s why I’m convinced active engagement beats passive study every time.

Why AI Governance, Ethics, and Privacy Matter as Much as Technical Know-How

It’s no longer enough to just code a model. In 2025, AI governance, ethics, and privacy are just as critical as technical skills. Industry roadmaps increasingly list responsible AI deployment as a core skill. Regulation is one driver: the EU’s AI Act, which entered into force in 2024, places obligations on providers and deployers of high-risk AI systems, and that is reshaping hiring criteria for AI roles in Europe.

If you ignore these areas, you risk becoming obsolete. But if you master them, you’ll be in demand for roles like AI Ethics Officer or AI Governance Lead—positions that didn’t even exist five years ago.

Quick Look: MLOps, Multi-Agent Systems, Retrieval-Augmented Generation, and Tomorrow’s Wild Cards

Let’s get concrete. Here’s a snapshot of the most in-demand AI skills for 2025, based on current industry data:

| Skill | Industry Demand (2025) |
|---|---|
| MLOps | Very High |
| Agentic AI (Multi-Agent Systems) | High |
| AI Governance & Privacy | High |
| Retrieval-Augmented Generation | Emerging |

(Source: First AI Movers, Krishnaik06 AI Roadmap)
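For a feel of what the Retrieval-Augmented Generation row involves, here is its core idea in miniature: find the passages most relevant to a question, then put them in the prompt. This is a deliberately simplified sketch. It scores passages by shared words, whereas real RAG systems typically use embeddings and a vector index. The three passages are short definitions written for the example.

```python
from __future__ import annotations

import re
from collections import Counter


def words(text: str) -> Counter:
    """Lowercase word counts; punctuation and hyphens act as separators."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def overlap(query: str, chunk: str) -> int:
    """Score a chunk by shared query words (a stand-in for embedding similarity)."""
    q, c = words(query), words(chunk)
    return sum(min(count, c[w]) for w, count in q.items())


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k highest-scoring chunks."""
    return sorted(chunks, key=lambda ch: overlap(query, ch), reverse=True)[:k]


def grounded_prompt(query: str, chunks: list[str], k: int = 2) -> str:
    """Augment the question with the retrieved chunks before sending it to a model."""
    sources = "\n".join(f"- {c}" for c in retrieve(query, chunks, k))
    return f"Answer using only these sources:\n{sources}\n\nQuestion: {query}"


chunks = [
    "MLOps covers deploying and monitoring models in production.",
    "Retrieval-Augmented Generation adds retrieved documents to the prompt.",
    "Multi-agent systems split a task across several cooperating models.",
]
print(grounded_prompt("How does retrieval-augmented generation use documents?", chunks, k=1))
```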

I recommend picking one “wild card” skill—like multi-agent systems—and building a project around it. This signals to employers that you’re not just current, but ahead of the curve.

Staying Ahead: Real-World Projects and AI Certifications

'Practical experience through real-world projects and portfolio building is recommended for career advancement in AI.'

I stand by this: certifications like TensorFlow Developer or Microsoft’s Azure AI Engineer Associate are valuable, but they shine brightest when paired with real deployments. For instance, after earning my AWS Machine Learning certification, I built a privacy-compliant chatbot for a healthcare startup. That project became the centerpiece of my portfolio, and the reason I landed my next job.

Connecting with Communities: Accountability, Mentorship, and Secret Opportunities

AI networking isn’t just about LinkedIn connections. I’ve found the most growth through active communities—think Kaggle, local AI meetups, or Discord servers. These spaces offer:

- Accountability partners for your learning goals

- Mentors who provide honest feedback

- Access to “hidden” job leads and collaboration offers

If you’re serious about AI career transformation, embed yourself in these circles. The secret opportunities you’ll uncover are often the ones that never get posted on job boards.

Frequently Asked Questions: Wild Cards and Tangents

Let’s get real for a moment. If you’re following this AI roadmap for beginners, you’re probably swimming in questions that don’t show up in the usual tutorials. I’ve been there, too—staring at my screen, wondering if I was missing some secret handshake or hidden formula. Here’s the truth: your quirks, doubts, and even your wildest tangents are the real fuel for your AI career transformation. Let’s dive into the questions that keep popping up, the ones that make you pause and think, “Is this just me?” Spoiler: it’s not.

Is there really a ‘best’ AI tool to start with—or is it all hype?

When I started, I spent weeks obsessing over which tool would give me a head start. Python, ChatGPT, Midjourney, AutoML: the list felt endless. Here’s what I learned: there is no perfect tool. The “best” AI tool is the one you’ll actually use. Pick something that excites you, even if it’s just a chatbot or a simple image generator. The magic isn’t in the platform; it’s in the practice. With every prompt you write and every revision you make, you aren’t training the model; you’re training yourself. That’s the AI project that really matters.

What if I’m bad at math? Can I still master AI?

This is the big one. I used to think my rusty algebra skills would hold me back. But here’s the secret: using AI today is more about curiosity and creativity than calculus. Most modern tools hide the math behind user-friendly interfaces. If you can ask questions, spot patterns, and experiment, you’re already on the right path. Making the journey personal breaks down more barriers than expertise or credentials alone. So don’t let math anxiety stop you; let your curiosity lead instead.

How do I keep my AI outputs sounding fresh, not robotic?

After a few weeks, it’s easy to fall into the trap of copy-paste prompts and bland results. I call this “AI creative burnout.” The fix? Add your own flavor. Run the output through the OCEAN framework, then mix in your personal stories, odd metaphors, or even a joke. Challenge the AI with wild cards: “Write this as if you’re a pirate,” or “Explain it to a five-year-old.” The more you play, the more your outputs will sound like you, not a machine.

What’s the best way to regain control after AI gives a bizarre answer?

We’ve all seen it: AI goes off the rails, spitting out something totally unexpected. My advice? Don’t panic—get curious. Ask yourself, “What did I actually ask for?” Then, tweak your prompt, clarify your intent, or even ask the AI to explain its reasoning. Remember, every odd answer is a chance to learn. Over time, you’ll get better at steering the conversation back on track.

Should I be worried about AI making things up—and how do I spot hallucinations?

Absolutely. As the saying goes:

“AI will sound just as confident when it’s wrong as when it’s right.”

Always double-check facts, especially if you’re using AI for research or decision-making. Cross-reference with trusted sources. If something feels off, it probably is. Trust your instincts and verify everything important.

How important is building an AI portfolio compared to certifications?

In my experience, a portfolio of real AI projects speaks louder than any certificate. Employers and collaborators want to see what you’ve built, not just what you’ve studied. Start small—share your experiments, document your process, and let your journey shine. Your unique perspective is your greatest asset on the unconventional roadmap to AI mastery in 2025.

Remember, AI is not here to replace human work—it’s here to restore human worth. Embrace your questions, follow your tangents, and let your curiosity be your guide. That’s how you unleash your inner AI whisperer.

TL;DR: Forget generic AI advice: master the language, context, and mindset of AI in seven unpredictably personal steps, and you’ll leapfrog the crowd in just 30 days.
