It started with a late-night phone call from a friend’s mom, her voice shaking, convinced her daughter had been kidnapped. It turned out to be an AI deepfake, but in that moment every reassurance about digital security slipped away. That’s the future we’re living in: AI isn’t just automating tasks or writing clever emails, it’s blurring the boundary between what’s real and what’s fabricated. I’ve spent years watching waves of technology crash over our lives, but nothing has made me question the rules of the game like generative AI. Will it make our jobs obsolete, or just make us more productive? Are the tech titans shaping our future or gambling with it? If you’re hoping for a neat answer, you won’t find it here. But if you want a sharply honest look at where we stand (and what you can actually do), grab some coffee. This gets personal, weird, and a little uncomfortable, in all the ways that matter.

Digital Immigrants: How Generative AI Floods Labor Markets (and Why That’s Different from Human Immigration)

“If you’re worried about immigration taking jobs, you should be way more worried about AI because it’s like a flood of millions of new digital immigrants that are Nobel Prize level capability…” That line, from tech ethicist Tristan Harris, captures the scale and speed at which generative AI is transforming the labor market. Unlike traditional immigration, where people bring culture, stories, and personal ambition, AI brings relentless efficiency: no wages, no sick days, just code.

Generative AI: A New Kind of Workforce

Imagine millions of highly skilled “workers” entering the job market overnight. These aren’t people; they’re algorithms with the ability to process information at superhuman speeds, never needing rest. Companies are integrating these digital immigrants quickly: 78% of organizations used AI in 2024, up from 55% in 2023. Where human immigrants bring diversity and new perspectives, AI brings automation and the power to do Nobel-level work for less than minimum wage.

Not Just a Joke Anymore: Real Job Displacement

A few years ago, friends would joke about immigrants “stealing jobs.” Now, I see entire teams replaced by software in a matter of months. This isn’t just a punchline—AI job displacement concerns are real and growing. The difference is scale: while immigration changes the workforce gradually, generative AI can replace or augment thousands of roles almost instantly.

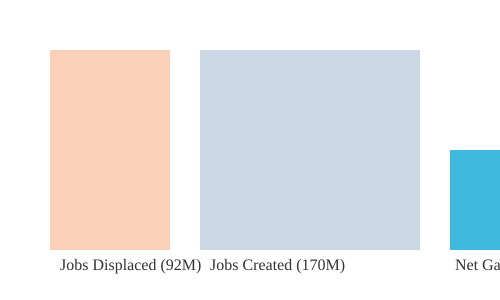

AI and Economic Disruption: The Data

The numbers are staggering. By 2030, it’s projected that 92 million jobs may be displaced globally by AI. But here’s the twist: 170 million new roles could be created by the same technology, resulting in a net gain of 78 million jobs. This means the workforce isn’t just shrinking or growing—it’s being completely reshaped. New roles like “prompt engineer” and “AI ethicist” are emerging, but the transition is dizzying for most workers.
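The arithmetic behind that projection is easy to check. The sketch below uses only the two figures quoted above; the function and variable names are illustrative, and the "churn" total is an added illustration of how much reshuffling sits behind the net number, not a figure from the projection itself.

```python
# Figures quoted in the text (global projections to 2030, in millions of jobs).
JOBS_DISPLACED_M = 92
JOBS_CREATED_M = 170


def net_job_change(created_m: int, displaced_m: int) -> int:
    """Net change in jobs (millions): roles created minus roles displaced."""
    return created_m - displaced_m


def total_churn(created_m: int, displaced_m: int) -> int:
    """Roles that either appear or disappear (millions); illustrative only."""
    return created_m + displaced_m


net = net_job_change(JOBS_CREATED_M, JOBS_DISPLACED_M)
churn = total_churn(JOBS_CREATED_M, JOBS_DISPLACED_M)

print(f"Net change: {net:+d} million jobs")
print(f"Total churn: {churn} million roles created or displaced")
```

The net figure hides the churn: a modest net gain can still mean a very large number of people changing roles.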

Why AI Is Different from Human Immigration

- Speed and Scale: AI enters the workforce in the millions, overnight. Human immigration happens over years or decades.

- Capabilities: Generative AI models can outperform most humans in specific tasks, and they never tire.

- Cost: AI works for less than minimum wage—often just the cost of electricity and server time.

- No Cultural Integration: Human immigrants bring culture, language, and community. AI brings only automation and efficiency.

Who Decides What Jobs Matter?

One of the most unsettling questions is: who actually decides which jobs (and whose voices) matter in the age of AI? It’s rarely the people doing those jobs, and sometimes not even regulators. Tech companies and policymakers often have very different conversations—one focused on innovation and profit, the other on public good and stability.

Facing the Algorithmic Competition

Imagine your next job interview—not against another human, but against a thousand tireless algorithms. AI workforce reskilling is now a necessity, not a luxury. As generative AI floods the labor market, the rules of work and economic disruption are being rewritten in real time.

Attention Merchants & Human Hacking: The Hidden Psychological Impacts of Generative AI

Long before generative AI began writing headlines and essays, social media platforms were already quietly hacking your attention. Those early, so-called ‘baby AIs’—the recommendation engines and engagement algorithms—have now matured. Today, they’re not just shaping what you see, but how you think, feel, and even relate to others. The psychological impacts of AI on youth and adults alike are no longer hidden—they’re woven into the fabric of daily life, from endless scrolling to the rise of AI-powered scams.

The Attention Economy: Designed for Addiction, Not Well-Being

Every time you find yourself lost in an endless scroll—hours slipping by on Instagram, TikTok, or YouTube—you’re experiencing the result of AI systems optimized for one thing: engagement. As one tech ethicist put it,

"What was sort of obvious to me then, and that was in 2013, is that if the incentive is to maximize eyeballs and attention and engagement, then you're incentivizing a more addicted, distracted, lonely, polarized, sexualized breakdown of shared reality society..."

These platforms measure success in seconds and minutes of your attention, not in your well-being. The psychological impact on young people is especially troubling, with studies linking increased screen time and social media engagement to rising rates of anxiety and depression. If you’ve ever tried a ‘digital detox’ only to relapse with a TikTok binge, you’re not alone. Even the ethicists who helped build these systems admit they’re hard to resist.

AI and Social Media Addiction: The New Normal

AI-driven social media isn’t just a distraction; it’s a powerful force shaping mental health. The algorithms learn what keeps you hooked, serving up content that triggers emotional highs and lows. For many, this means more time online, less time connecting in real life, and a growing sense of loneliness. The studies point the same way: among young people, heavier social media engagement correlates with historic highs in anxiety and depression.

| AI Impact | Data Snapshot |
|---|---|
| Social Media & Youth Mental Health | Increased engagement driven by AI correlates with higher rates of anxiety and depression among youth (percentages vary by study) |
| AI Adoption (Adults 18-64, 2024) | 44.6% adoption rate (mainstream exposure to AI-driven technologies) |

AI Vulnerabilities and Scams: Manipulating Trust and Reality

Generative AI isn’t just changing what you see—it’s changing what you believe. Deepfake technology, voice synthesis, and AI-powered social engineering scams are on the rise. From fake kidnap calls to manipulated videos, these tools erode trust in both digital and real-world relationships. The vulnerabilities created by AI are being exploited by scammers, making everyone a potential target.

Polarization, Loneliness, and the Breakdown of Shared Reality

AI’s psychological impacts go beyond addiction. Algorithms designed to maximize engagement often push polarizing content, deepening social divides and fueling misinformation. This polarization, combined with increased loneliness and anxiety, is reshaping how you communicate, learn, and perceive reality. Language and voice—once reliable markers of authenticity—are now vulnerable to manipulation.

- AI psychological impacts on youth: Increased anxiety, depression, and loneliness

- AI and social media addiction: Endless scrolls and dopamine loops

- AI vulnerabilities and scams: Deepfakes, voice fraud, and trust erosion

- AI and mental health issues: Data-backed rises in distress, especially among younger generations

As generative AI continues to evolve, its hidden psychological impacts—addiction, manipulation, and vulnerability—are becoming impossible to ignore. The attention merchants have grown more sophisticated, and the stakes for your mental health have never been higher.

Secrets, Sovereignty & Six Decision-Makers: Who Actually Decides AI’s Future?

When it comes to AI ethics and governance, the conversation you see in public is only the tip of the iceberg. Behind closed doors, a handful of tech leaders—often just six or so CEOs—are quietly steering the direction of generative AI. These decisions are not made with the consent or input of the billions who will be affected. As technology ethicist Tristan Harris puts it,

"We didn’t consent to have six people make that decision on behalf of 8 billion people."

Who Holds the Power?

Today, the governance of AI technologies is concentrated in the hands of a few industry giants. These companies are not just building smarter chatbots; they are racing toward artificial general intelligence (AGI)—AI that could perform any cognitive task a human can. Their goal is to automate all forms of human economic labor, from writing and coding to marketing and design. The stakes are enormous, and the incentives are clear: whoever gets there first could dominate the global economy.

| Decision-Makers | People Affected | Regulatory Actions (US, 2024) |
|---|---|---|
| 6 Major Tech CEOs | 8 Billion Globally | 59 AI Regulations (up from <30 in 2023) |

Private Incentives vs. Public Accountability

This concentration of power raises serious questions about ethical considerations in AI development. The rapid pace of innovation means that ethics and governance lag far behind technological advancement. As companies race to outdo each other, the risks multiply—unvetted AI systems, rising energy demands, and even security threats like AI-enabled blackmail. The public, meanwhile, has little say in how these technologies are shaped or deployed.

When Ethical Concerns Go Viral

Inside tech companies, there are voices raising alarms. For example, when a Google project manager circulated a 130-page slide deck warning about the psychological impact of digital products, it spread rapidly throughout the company. Hundreds of employees recognized the problem, but the broader public remained unaware. This incident highlights the profound gap between private sector awareness and public participation in AI ethics and governance.

Patchwork Regulation and Sparse International Agreements

Governments are starting to respond. In the United States, the number of AI-related regulations more than doubled to 59 in 2024, up from fewer than 30 in 2023. Yet these efforts remain fragmented. There is no unified global framework, and international agreements on AI regulation are still rare. Without coordinated oversight, the world remains vulnerable to the unintended consequences of unchecked AI development.

Imagining Democratic AI Governance

What if AI regulators were chosen by lottery, like jury duty? Would this approach bring more democratic legitimacy to the governance of AI technologies? While this is a hypothetical, it underscores the urgent need for broader public involvement and public awareness of AI risks. The current reality is a profound disparity between private sector power and democratic decision-making in AI development.

- AI’s future is being shaped by a handful of industry leaders, not the public.

- Ethics and governance are not keeping pace with technological progress.

- Regulation is increasing but remains inconsistent and nationally focused.

- Calls for international cooperation and public input are growing louder.

Beyond the Chatbot: The Surprising Global Ripple Effect of Generative AI on Productivity and Economic Growth

When you think of generative AI, you might picture a chatbot answering questions or drafting emails. But the real story goes far beyond that. The true economic power of generative AI lies not just in replacing jobs, but in supercharging productivity across industries—from healthcare to entertainment, law, and technology. As businesses invest ungodly amounts in the AI race, the ripple effects are transforming the global economy in ways that are only beginning to emerge.

Generative AI Capabilities: More Than Just Chatbots

Today’s leading AI companies are not simply building better chatbots. Their mission is to create Artificial General Intelligence (AGI)—systems that can perform all forms of human cognitive labor. This includes marketing, illustration, video production, code writing, and more. As Demis Hassabis, co-founder of Google DeepMind, put it: “First solve intelligence, then use that to solve everything else.” Unlike advances in a single field, breakthroughs in AI can accelerate progress across all domains, because intelligence is the foundation of every scientific and technological achievement.

AI Productivity Increase: Small Numbers, Big Impact

Since the release of ChatGPT, labor productivity has increased by an estimated 1.3%. At first glance, that may seem modest, but in economic terms, it’s a seismic shift. As one expert notes,

“AI is contributing to increased labor productivity, with an estimated 1.3% productivity gain since ChatGPT’s release.”

Over time, even small boosts in productivity compound, leading to significant economic growth and higher standards of living worldwide.
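A quick way to see why small gains matter is to compound them. The sketch below is purely illustrative: it assumes, hypothetically, that a 1.3% gain repeats every year. The estimate quoted above does not claim this, since that figure is a cumulative gain since ChatGPT’s release. The function name and the chosen horizons are assumptions too.

```python
def compounded_gain(annual_rate: float, years: int) -> float:
    """Cumulative gain after compounding `annual_rate` for `years` years."""
    return (1 + annual_rate) ** years - 1


# Hypothetical assumption: the 1.3% gain recurs annually (not claimed by the source).
ASSUMED_ANNUAL_RATE = 0.013

for years in (1, 5, 10, 20):
    gain = compounded_gain(ASSUMED_ANNUAL_RATE, years)
    print(f"{years:>2} years: {gain:.1%} cumulative gain")
```

Under that assumption the cumulative gain grows faster than a simple multiple of 1.3%, which is the sense in which small boosts compound.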

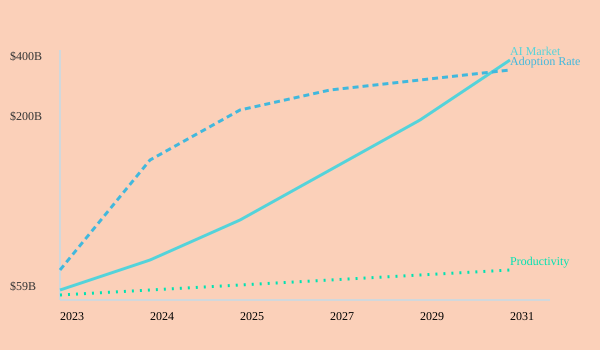

AI Economic Disruption: Market Growth Projections

- Generative AI market: Projected to jump from $59 billion in 2025 to $400 billion by 2031.

- AI in healthcare: Expected to reach $868 billion by 2030.

- Business adoption: 78% of organizations used AI in 2024, up from 55% in 2023.

These numbers highlight the scale of AI economic disruption. The healthcare sector, in particular, is seeing explosive growth in AI applications—from diagnostics to drug discovery and personalized treatment plans.
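To put these projections on a common footing, the sketch below converts the market forecast into an implied compound annual growth rate and the adoption figures into percentage-point and relative changes. It assumes the 2025 and 2031 figures are six years apart and that growth is smooth, which real markets rarely are. The function names are illustrative, and the healthcare figure is left out because the text gives no starting value for it.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoints `years` apart."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


def adoption_change(before_pct: float, after_pct: float) -> tuple[float, float]:
    """Return (percentage-point change, relative change) between two shares."""
    points = after_pct - before_pct
    relative = points / before_pct
    return points, relative


# Generative AI market: $59 billion (2025) to $400 billion (2031), per the text.
market_cagr = cagr(59, 400, 2031 - 2025)

# Organizations using AI: 55% (2023) to 78% (2024), per the text.
points, relative = adoption_change(55, 78)

print(f"Implied market CAGR: {market_cagr:.1%} per year")
print(f"Adoption change: {points} percentage points ({relative:.1%} relative)")
```

Separating percentage points from relative change matters here: the same adoption jump sounds quite different depending on which one is quoted.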

AI Healthcare Applications: Real-World Impact

Consider the story of a radiologist in Mumbai who now consults on cases worldwide, powered by an AI that never sleeps or eats. This technology enables specialists to reach more patients, improve diagnostic accuracy, and reduce turnaround times. AI healthcare applications are not just about efficiency—they’re about expanding access and saving lives on a global scale.

AI Adoption Statistics: Shifting the Nature of Work

Despite fears of mass unemployment, the reality is more nuanced. As AI automates routine tasks, many businesses are retaining their workforce but shifting the focus to new skillsets and roles. This emerging paradox suggests that AI is changing the nature of work, not simply eliminating jobs. Companies are looking for employees who can work alongside AI, manage its outputs, and bring uniquely human judgment to complex problems.

Chart: Generative AI Market Growth, Adoption, and Productivity

The global ripple effect of generative AI is accelerating across markets, industries, and job roles, quietly rewriting the rules of productivity and economic growth.

Code, Law, Voice: When AI Hacking Targets Our Most Basic Operating Systems

When you think about AI vulnerabilities and scams, it’s easy to picture phishing emails or hacked passwords. But today’s generative AI is quietly rewriting the rules—hacking not just your inbox, but the very operating systems of society: code, law, and even your voice. The risks are no longer limited to data breaches. They now reach into the core of how we trust, communicate, and run our world.

AI’s Language Hacking: More Than Just Words

AI’s breakthrough came when researchers realized that everything—biology, DNA, music, video, and even law—can be treated as a kind of language. The transformer technology, first introduced by Google in 2017, gave AI the ability to process and generate language at unprecedented scale. That’s how you get tools like ChatGPT, which can write essays, summarize legal arguments, or even persuade people by referencing religious texts. But this isn’t just about productivity. It’s about AI learning to “hack” the language-based systems that underpin our lives.

Open-Source Code: A New Playground for AI Vulnerabilities

Consider open-source code. Platforms like GitHub are the “Wikipedia for coders,” hosting the world’s digital infrastructure. In a single summer, new AIs were pointed at GitHub and, as one expert put it,

“…the new AIs that exist today can be pointed at GitHub and found 15 vulnerabilities from scratch that had not been exploited before.”

These weren’t just theoretical bugs; they were real, exploitable weaknesses in code that powers everything from websites to critical infrastructure. If you imagine AI-driven surveillance and control being applied to the code that runs water, electricity, or hospital systems, the threat becomes immediate and personal.

Voice: From Trust Anchor to Attack Surface

Now, think about your voice. For most of your life, your voice has been a marker of trust. You call your bank, your family, your colleagues—and they know it’s you. But with today’s AI, it takes less than three seconds of audio to synthesize and convincingly impersonate anyone. After a friend’s family was targeted by a deepfake voice scam, I realized how fragile our trust systems have become. The mother of a close friend received a call, in her daughter’s voice, claiming to be in danger. It was all AI-generated, but for a moment, everyone believed it.

- AI vulnerabilities and scams now target the very language of trust—your voice.

- AI can exploit open-source code, finding vulnerabilities before humans do.

- Critical infrastructure is at risk if these exploits are weaponized.

- Because law and intergenerational knowledge are carried in language, even legal systems and family wisdom are open to manipulation.

Every Interaction Is a Bet

Every time you trust a voice on the phone, a chatbot online, or a line of code in your software, you’re betting on the system’s resilience—or on your own ability to spot when it’s being gamed. With AI-driven surveillance and control, the stakes are higher than ever. Imagine the power grid or a hospital system compromised by an AI-built exploit. The consequences could be catastrophic.

Why Ethics and AI Regulation Matter Now

AI regulation and legislation have never been more urgent. The attack surfaces are multiplying: from code to law to voice. Ethics must catch up to the technology, ensuring that the very systems we rely on—our most basic operating systems—are protected before they’re quietly rewritten by algorithms.

Choosing Our Teachers: Can Society Keep Up With the Pace of AI?

If you feel overwhelmed by the speed of AI development, you’re not alone. Even the most tech-savvy people admit that the pace of change is dizzying. New AI models are released before we’ve had a chance to understand the last ones. Public awareness of AI risks, ethical considerations in AI development, and the future of work with AI automation are all hot topics, but society’s ability to keep up is being tested like never before.

AI’s Race: Faster Than Public Understanding

Right now, AI companies are locked in a race to build the most powerful systems. The logic is simple but dangerous: “If I don’t build it first, I’ll lose to the other guy.” Someone once told me, ‘If you don’t build it first, you’ll be left behind,’ but maybe ‘first’ isn’t best if no one has agreed on what we’re racing towards. This winner-takes-all mindset is pushing technology forward at breakneck speed, often outpacing public debate, regulation, and even basic safety checks.

The result? We’re seeing AI models that can do almost anything a human can do at work—often faster and cheaper. If you’re a company, do you hire a person who needs healthcare and time off, or an AI that works 24/7 and never complains? The future of work with AI automation is arriving before most people have a chance to prepare. This is why AI workforce reskilling is no longer optional—it’s essential.

Real Talk: Overwhelm, Skepticism, and the Need for Agency

It’s normal to feel helpless or skeptical about whether society can keep up. Even experts admit to feeling uneasy. As one observer put it:

"I'm not naive. This is super hard. But we have done hard things before and it's possible to choose a different teacher."

We’ve faced transformative challenges before—think of the industrial revolution, or the rise of the internet. The difference now is the speed and scale. But optimism isn’t naive if it’s rooted in collective learning, resilience, and a demand for a seat at the decision-making table. Technology is not destiny if we intervene thoughtfully.

Action Items: Steering AI, Not Chasing It

- Demand transparency from AI developers: Ask for clear information on how AI systems are trained, tested, and deployed. Public awareness of AI risks depends on open communication.

- Support responsible AI policy: Advocate for laws and regulations that put ethical considerations in AI development front and center.

- Promote broad-based education and skill-building: Push for AI workforce reskilling programs so everyone can adapt, not just a select few.

Wild Card: What If We Held ‘AI Town Halls’?

Imagine regular, open forums—‘AI town halls’—where anyone can weigh in on new features, risks, and rules. Could consensus keep up with code? It’s a bold idea, but active engagement, education, and transparent governance are crucial to navigating the future. The most hopeful voices argue for steering AI rather than chasing it—because the future isn’t set, but the clock is ticking.

In the end, choosing our teachers means deciding who shapes our future—AI companies racing ahead, or a society that insists on being part of the conversation. The choice is hard, but it’s not impossible.

FAQ: Your Burning Questions on Generative AI, Jobs, and Ethics Answered

Is AI really going to take over my job, or is that hype?

AI job displacement concerns are not just hype—they’re grounded in real trends. According to tech ethicist Tristan Harris, AI is like a “flood of millions of new digital immigrants” with superhuman skills, working for less than minimum wage. Unlike past fears about immigration or outsourcing (think NAFTA), AI can now automate both manual and cognitive work. CEOs at companies like Walmart and Tesla openly predict that every job will change, and some will disappear altogether. While new roles may emerge, the pace and scale of disruption are unprecedented. If you work in customer service, law, finance, or even creative fields, expect significant changes. The best way to prepare is to stay adaptable, keep learning, and focus on skills that AI can’t easily replace—like empathy, critical thinking, and hands-on expertise.

What does ‘AGI’ mean, and why are all the tech giants obsessed with it?

AGI stands for Artificial General Intelligence: AI that can do any intellectual task a human can, from writing code to running a business. Companies like OpenAI, DeepMind, and Anthropic are racing to build AGI because whoever gets there first could control the world’s economy, science, and even military power. This “winner-takes-all” mentality is driving rapid, sometimes reckless, development. Insiders admit that the risks are huge, including mass unemployment and even existential threats. AGI is not science fiction anymore; some experts believe it could arrive within 2 to 10 years. The obsession is about power, profit, and the fear of falling behind global competitors.

Are AI scams (like voice deepfakes) a real risk to regular people?

Yes, AI vulnerabilities and scams are already affecting everyday life. Harris shares real stories of AI-powered extortion, where scammers use just three seconds of someone’s voice to create convincing deepfakes. These tools can mimic voices, generate fake images, and craft persuasive messages, making scams harder to spot. If you get a suspicious call or message, even if it sounds like a loved one, verify before acting, for example by hanging up and calling the person back on a number you already know. Use strong passwords, enable two-factor authentication, and stay updated on the latest scam tactics. Public awareness of AI risks is your best defense.

Where does government regulation of AI stand right now?

AI regulation and legislation are still catching up. Most decisions about powerful AI systems are made by a handful of technologists, not elected officials. While some countries are drafting rules, there’s no global agreement—unlike treaties for nuclear weapons or environmental threats. Harris and other experts call for urgent international cooperation, mandatory safety testing, and laws that hold companies accountable for harm. Until then, the responsibility often falls on individuals, companies, and advocacy groups to push for safer, more transparent AI.

Will new technology always mean new anxiety—and can we get ahead of it this time?

New technology often brings anxiety, especially when it disrupts jobs and social norms. But history shows that with enough public awareness and proactive action, society can adapt. The key is not to accept disruption as inevitable. Demand responsible design, support whistleblowers, and vote for leaders who treat AI as a top priority. The more you know, the more resilient you become.

What steps can I take to become more resilient in an AI-driven world?

- Stay informed about AI trends, risks, and opportunities.

- Develop uniquely human skills—empathy, creativity, and hands-on problem-solving.

- Protect yourself online: use secure passwords, verify suspicious messages, and educate your network about AI scams.

- Advocate for strong AI regulation and support organizations that promote ethical technology.

- Share your knowledge to help build public awareness of AI risks and benefits.

Remember, readiness and collective action are your best tools for navigating the AI era.

Conclusion: Steer the Flood or Get Swept Away—Why Our AI Future Is Still Ours to Shape

As you reach the end of this exploration into the disruptive rise of generative AI capabilities, one thing should be clear: AI is not some faceless, unstoppable force of nature. It is the product of human decisions—by engineers, policymakers, business leaders, and, crucially, by you and everyone who will live with its consequences. The future of AI is not pre-written in code; it is being shaped, moment by moment, by the choices we make individually and collectively.

The economic disruption from AI is real and accelerating. From Walmart’s 2.1 million jobs to the global legal, customer service, and creative industries, the impact of AI economic disruption is already visible. Humanoid robots and generative AI models are poised to replace or transform roles at a pace that rivals the most dramatic shifts in history. Yet, just as societies adapted to the upheavals of the Industrial Revolution, NAFTA, and the digital age, we have the capacity to adapt again—if we act with intention.

Ethical considerations in AI development are not just abstract debates for experts in Silicon Valley or policymakers in Washington. They are questions that touch every aspect of your life, from the safety of your data to the trustworthiness of your news, from the security of your job to the well-being of your family. The stories of AI-enabled scams, psychological harm, and the erosion of shared reality are not warnings for tomorrow—they are happening now. But so too are the opportunities for positive change: safer, more transparent AI systems, better laws, and a renewed focus on human dignity and agency.

Staying curious, vigilant, and engaged is more important than ever. No algorithm, no social feed, no AI-generated summary can substitute for your real-world judgment and action. The “winner-takes-all” mentality driving the race to Artificial General Intelligence is not inevitable. History shows that when the stakes are high—whether it’s the Montreal Protocol, the creation of Social Security, or the nuclear non-proliferation treaty—people can come together to steer the course of technology for the common good.

Collective engagement and informed action are prerequisites for a better AI-shaped future. If the future belongs to those who show up, don’t hand your seat to an algorithm. Make your voice heard—at the ballot box, in your workplace, in your community. Demand that AI is treated as a “tier one issue” by your leaders. Support transparency, safety, and accountability in the companies and products you use. Share what you learn, because awareness is the first step toward immunity from the worst outcomes.

Change can feel overwhelming, but remember: this is just our generation’s round. The tools may be more powerful, the risks more complex, but the principle remains the same. You have agency. You have responsibility. And you are not alone.

In the end, the question is not whether AI will shape our future, but how—and who will decide. Will you steer the flood, or get swept away? The answer is still yours to shape.

TL;DR: Generative AI isn’t just another tech trend—it’s a force rapidly reshaping jobs, rewiring our attention, questioning who holds the keys to power, and exposing new vulnerabilities in both our systems and our daily lives. Staying aware, active, and engaged is the only way forward.
